This document describes some basic CPU load testing exercises on three different types of database servers used by the Oracle Service at CERN.

It reports on the tests performed, tools used for data gathering, data analysis, findings, and lessons learned.

Motivations

CPU usage is important for data processing: We observe that workloads on Oracle database services at CERN are often CPU-bound. Database workloads for transactional processing perform many random read operations. In the past, this mostly stressed the I/O subsystem; these days we deploy databases with large buffer caches (400 GB or more of data block caches) and most operations are CPU-bound, reading data from the buffer cache.

Server consolidation, quality of service and licensing: We deploy on commodity hardware under various constraints, striking a balance between consolidating workloads and isolating critical workloads from different user communities. Moreover, Oracle licensing costs, which are proportional to the number of deployed CPUs, are a key input in the effort to streamline CPU deployments across the DB service.

Description and limitations of the tests

The tests reported here are extremely limited in scope, as they focus only on CPU performance, with two specific and “narrow” workloads. However, I believe they provide some indications of the CPU performance and the overall CPU capacity of the installed servers. The comparison between three different server models is the original motivation of this work, as we wanted to understand how newer models can be deployed to replace old ones. This work is not a benchmark of the tested systems.

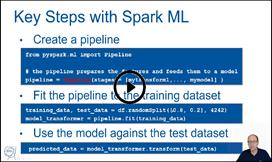

Tools used for load testing

The first workload generator and testing tool is a simple script that burns CPU cycles in a loop and is executed by multiple workers running in parallel. Two implementations have been used, one in Python and one in Rust compiled to binary; both provide similar results.

The second workload generator is SLOB, a tool that runs on top of Oracle databases for load testing. It specifically stresses “Logical IO”, that is, reading blocks from the Oracle buffer cache (memory).

Links to the code, measured data, and data analyses using notebooks:

Key findings

- RAC55 is the newest server model of the three tested and shows the highest per-thread CPU performance and the highest total CPU throughput at saturation.

- RAC55 has about 1.5x the single-thread performance of RAC52.

- RAC55 and RAC54 have similar single-thread performance, but this is valid only for low load, as RAC54 has only 8 physical cores vs 16 cores in RAC55. Moreover, RAC54 provides considerably less total CPU throughput compared to RAC52 and RAC55.

- RAC55 has about 2.0x more total CPU throughput at saturation compared to RAC52, despite having only 16 physical cores compared to 20 physical cores in RAC52.

Description of the platforms

CPU load tests have been performed on three dedicated test servers representative of the production database servers in March 2023: RAC52, RAC54, and RAC55.

The servers were installed with RHEL 7.9, and the Oracle tests used Oracle 19c (v. 19.17). We omit the configuration of networking and I/O, as it is not relevant for these tests. We don't report the exact CPU models in this doc.

RAC52 configuration:

- 20 physical cores (2 sockets, 10 physical cores each), 40 logical cores visible on the OS due to hyperthreading

- CPU nominal frequency: 2.20 GHz

- CPU from 2016, L1 caches: 32K + 32K, L2 cache 256K, L3 cache 25600K

- RAM: DDR4, 512 GB

RAC54 configuration:

- 8 physical cores (2 sockets, 4 physical cores each), 16 logical cores visible on the OS due to hyperthreading

- CPU nominal frequency: 3.80 GHz

- CPU from 2019, L1 caches: 32K + 32K, L2 cache 1024K, L3 cache 16896K

- RAM: DDR4, 768 GB

RAC55 configuration:

- 16 physical cores (2 sockets, 8 physical cores each), 32 logical cores visible on the OS due to hyperthreading

- CPU nominal frequency: 3.7 GHz

- CPU from 2019, L1 caches: 32K + 32K, L2 cache 512K, L3 cache 32768K

- RAM: DDR4, 1 TB

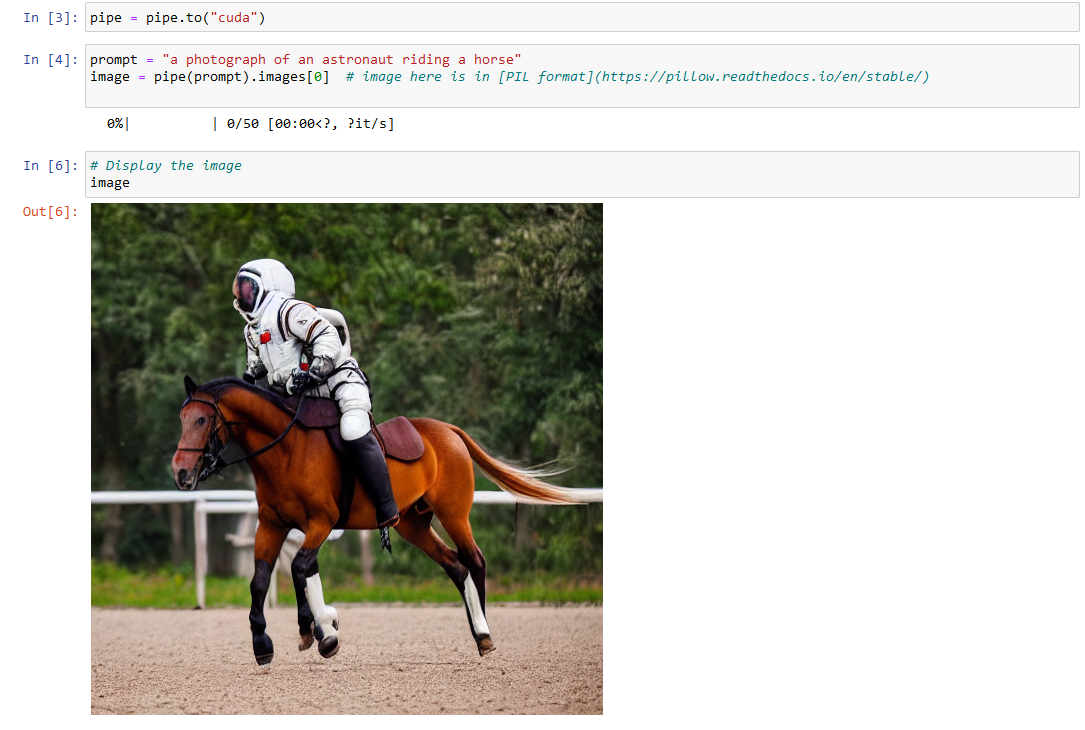

Test 1 – Concurrent workers burning CPU cycles in a loop and in parallel

The workload generator and testing tool is a simple Python script burning CPU cycles in a loop. The script is executed on a configurable number of concurrent workers and measures the time spent executing a simple CPU-burning loop. This provides a simple way to generate CPU load on the system.
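As an illustration of the kind of tool described here, the following is a minimal Python sketch, not the actual script used for these tests. The function names `burn_cpu` and `run_parallel`, the loop body and the default iteration count are assumptions chosen for the example. Worker processes are used rather than threads so that each worker can keep its own CPU thread busy.

```python
import time
from concurrent.futures import ProcessPoolExecutor


def burn_cpu(iterations: int) -> float:
    """Run a CPU-bound arithmetic loop and return its wall-clock time in seconds."""
    start = time.perf_counter()
    acc = 0
    for i in range(iterations):
        # Integer arithmetic only: instruction-intensive, very little memory access
        acc += i * i % 7
    return time.perf_counter() - start


def run_parallel(num_workers: int, iterations: int = 2_000_000) -> list[float]:
    """Run the same loop on num_workers processes at once; return one run time per worker."""
    with ProcessPoolExecutor(max_workers=num_workers) as pool:
        return list(pool.map(burn_cpu, [iterations] * num_workers))
```

For example, `run_parallel(8)` returns eight job run times measured under a load of 8 concurrent workers. On platforms that spawn rather than fork worker processes, it should be called from under an `if __name__ == "__main__":` guard.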

Example of how the data was collected with the testing tool written in Rust and compiled to binary:

./test_cpu_parallel --num_workers 8 --full --output myout.csv

See the code at this link

The advantage of this approach is that the testing tool is easy to write and can be easily automated.

The weak point of testing this way is that the test workload is somewhat “artificial” and disconnected from the server's actual purpose as a DB server. For example, the CPU-burning loop used for this test is mostly instruction-intensive on the CPU and does not spend much time on memory access.

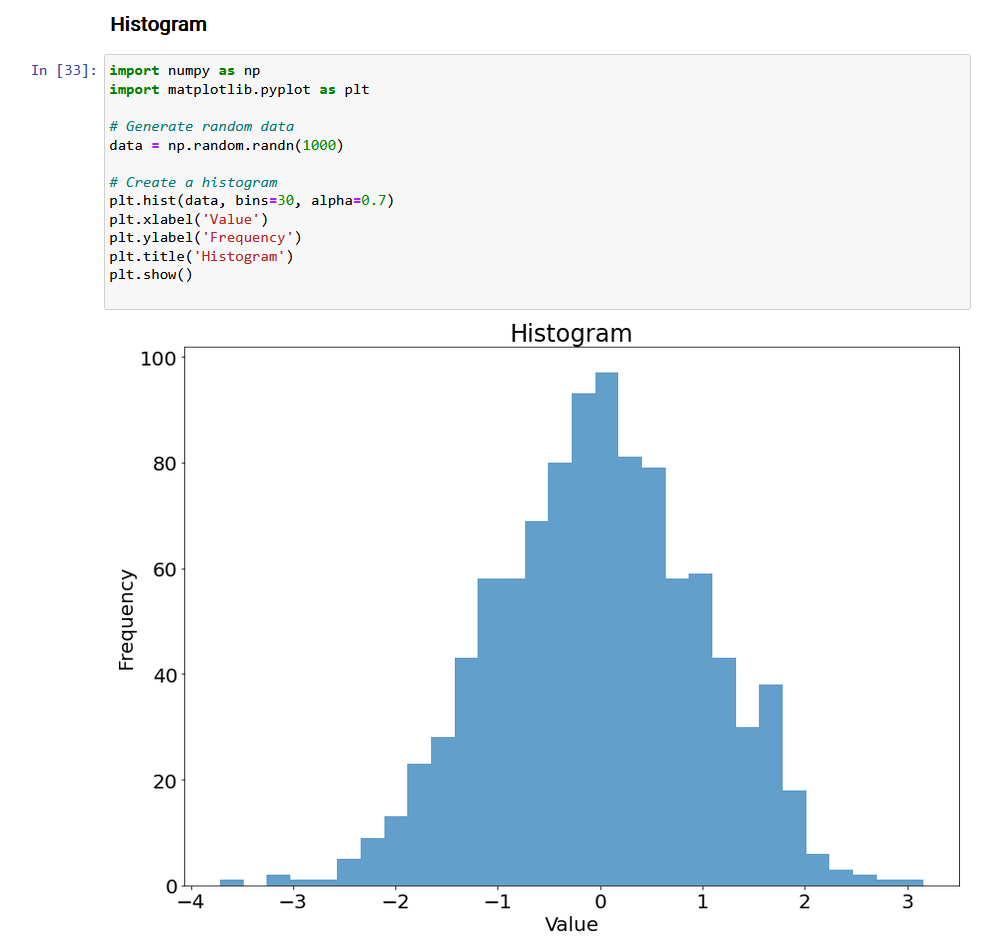

Measurements and results:

See also Data and Notebooks at this link

The following figures represent the same data in different ways to highlight different performance and scalability characteristics.

Figure 1 – Raw data

- The figure reports the test job execution time, measured for varying server load on the three tested servers.
- A common pattern is that at low load (see data with just a few parallel workers) the job run time is almost constant.
- An important difference is that the job run time differs between platforms; in order of increasing performance: RAC52, RAC54, RAC55 (the newest server and the fastest).
- Another pattern is that the job run time starts to increase linearly at higher load.
- The job execution time curve starts to bend upwards as the load increases. Typically, we see this happening when the number of parallel workers is greater than the number of physical cores on the server (20 cores on RAC52, 8 cores on RAC54 and 16 cores on RAC55).

Figure 2 - Speed

- This plot reports the number of jobs per minute per worker.
- Data points can be interpreted as a measure of the “speed of the CPU” for a new job coming into the system given a defined system load.
- We see that the “effective CPU speed” decreases as the load increases, with sudden changes at the points where the number of parallel workers is equal to the number of physical cores.
- The CPU speed per thread is also different depending on the CPU architecture; in order of increasing performance: RAC52, RAC54, RAC55 (the newest server and the fastest).

Figure 3 - Capacity

- This plot shows the number of jobs executed per minute summed over all the running worker threads.
- As the load increases the server capacity increases, reaching a maximum value when the number of workers equals the number of logical cores (40 for RAC52, 16 for RAC54, 32 for RAC55).
- This allows us to compare the “Total CPU capacity” of the three servers. In order of increasing capacity: RAC54 has the lowest capacity (it is the server with the fewest cores), then RAC52, and finally RAC55 has the highest CPU throughput (it’s the newest server).

Figure 4 - Scalability

- This shows the speedup, a measure of scalability. For the scope of this plot, speedup is calculated as N * (job execution time at load 1) / (job execution time at load N).
- We see almost linear scalability for low loads (up to the number of physical cores), then a slower increase up to the number of logical cores, and, eventually, the speedup reaches saturation.
- RAC54 and RAC55 appear to scale almost linearly up to the number of physical cores (respectively 8 and 16).
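The quantities plotted in Figures 2, 3 and 4 can all be derived from the job run times of Figure 1. The following sketch shows one way to do it, taking speedup as the capacity at load N divided by the capacity at load 1, that is N * T(1) / T(N). The function name `cpu_test_metrics` and the sample run times are assumptions made for illustration; they are not the measured data and not necessarily how the notebooks compute the plots.

```python
def cpu_test_metrics(job_times: dict[int, float]) -> dict[int, dict[str, float]]:
    """Derive per-worker speed, total capacity and speedup from job run times.

    job_times maps the number of concurrent workers to the job run time in seconds.
    """
    t1 = job_times[1]  # run time with a single worker, used as the baseline
    metrics = {}
    for n, t in sorted(job_times.items()):
        speed = 60.0 / t      # jobs per minute per worker (as in Figure 2)
        capacity = n * speed  # jobs per minute over all workers (as in Figure 3)
        speedup = n * t1 / t  # capacity(n) / capacity(1) (as in Figure 4)
        metrics[n] = {"speed": speed, "capacity": capacity, "speedup": speedup}
    return metrics


# Hypothetical run times in seconds, NOT measured data
sample = {1: 10.0, 8: 10.0, 16: 12.5, 32: 20.0}
for n, m in cpu_test_metrics(sample).items():
    print(n, m)
```

With these made-up numbers the run time stays flat up to 8 workers, so the speedup there equals the number of workers, and it falls below the ideal line once the run time starts to grow.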

Notes:

- Of the tested servers, RAC55 appears to be the fastest on per-thread CPU performance at low and high loads and the one with the highest CPU capacity.
- The difference in performance between RAC52 (oldest) and RAC55 (newest) is roughly 1.5x in per-thread CPU performance and 2x in overall CPU capacity at high load.
- RAC54 performs similarly to RAC55 but only at low loads (<= 8 concurrent workers).

Test 2 – Parallel workers running “SLOB tests”, measuring Oracle logical IO throughput

The second workload generator is SLOB, a tool by Kevin Closson that runs on top of Oracle databases for load testing and specifically stresses Physical and Logical IO. In the configuration used for these tests we only stressed Logical IO, that is, accessing blocks from the Oracle buffer cache (memory).

The tool creates test tables on the database and performs block IO reading from the test tables with a tunable number of concurrent workers.

See also the official SLOB page.

The Oracle database used for testing was configured with a large SGA (Oracle’s shared memory area), able to cache the test tables in the Oracle buffer cache. The test workload was therefore stressing Oracle’s “Logical IO”, that is, the part of the Oracle internal code that takes care of reading data blocks from the buffer cache (Oracle’s consistent read operations). Minimal additional operations were performed on the blocks accessed this way by SLOB. Logical IO is an operation with a duration of the order of 1 microsecond during these tests. Notably, it includes time for Oracle-internal operations and the serialization needed for reading data from the Oracle buffer cache, and finally the Oracle-internal code for reading the block header. The SLOB Logical IO test workload does not appear to saturate the CPU-memory channel on the tested servers: for example, on RAC55, OS tools showed the CPU-memory bandwidth used during SLOB Logical I/O tests going up to 40 GB/s, while with memory-intensive load generators the system could scale at least up to 220 GB/s (see also Tools_Linux_Memory_Perf_Measure).

Standard Oracle instrumentation, notably SQL*Plus (Oracle’s CLI tool) and AWR reports (Oracle’s performance report), was used to collect the number of Logical I/Os recorded during the test and for other sanity checks, notably checking that the test workload was fully reading from memory rather than performing Physical I/O.
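To make this sanity check concrete, the sketch below computes Logical I/O and Physical I/O rates from two snapshots of Oracle's cumulative counters taken before and after a test run. It is an illustration under assumptions, not the procedure used for these tests: the function name `lio_summary`, the dictionary layout and the sample values are hypothetical. The keys follow the `session logical reads` and `physical reads` statistic names exposed by Oracle (for example in `V$SYSSTAT`).

```python
def lio_summary(before: dict[str, int], after: dict[str, int], elapsed_s: float) -> dict[str, float]:
    """Compute logical and physical read rates from two cumulative counter snapshots."""
    logical = after["session logical reads"] - before["session logical reads"]
    physical = after["physical reads"] - before["physical reads"]
    return {
        "logical_reads_per_s": logical / elapsed_s,
        "physical_reads_per_s": physical / elapsed_s,
        # Should stay close to zero when the workload is served from the buffer cache
        "physical_fraction": physical / logical if logical else 0.0,
    }


# Hypothetical counter values, NOT measured data
snap_start = {"session logical reads": 1_000_000, "physical reads": 500}
snap_end = {"session logical reads": 61_000_000, "physical reads": 500}
print(lio_summary(snap_start, snap_end, elapsed_s=60.0))
```

A physical fraction that stays at or near zero over the measurement interval is what one would expect if the test tables are fully cached.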

SLOB test tables have been created with a size of 16 GB per user, with each concurrent worker running on a dedicated user. Tests for loads higher than 16 concurrent users have used 8 GB test tables per user. The Oracle buffer cache for testing was allocated using Linux large pages, as recommended by Oracle, and was 400 GB in size, therefore large enough to cache all the test data. We took care of caching the Oracle tables' data with a couple of executions of the test workload before starting to measure Logical I/O performance. The fact that Oracle was not performing Physical I/O during the tests was also double-checked using monitoring tools and by inspection of the AWR reports.
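The sizing in this paragraph can be checked with simple arithmetic. The sketch below is an illustration only: the helper `fits_in_cache` is hypothetical and ignores any buffer cache overhead. The 16 GB, 8 GB and 400 GB figures come from the text, while the 40-user case is an assumption based on the 40 logical cores of RAC52.

```python
BUFFER_CACHE_GB = 400  # size of the Oracle buffer cache used for the tests


def fits_in_cache(num_users: int, table_gb: int, cache_gb: int = BUFFER_CACHE_GB) -> bool:
    """Return True if the test tables of all users fit in the buffer cache."""
    return num_users * table_gb <= cache_gb


print(fits_in_cache(16, 16))  # 16 users x 16 GB = 256 GB
print(fits_in_cache(40, 16))  # 40 users x 16 GB = 640 GB
print(fits_in_cache(40, 8))   # 40 users x 8 GB = 320 GB
```

Under these assumptions, 16 GB tables fit in the cache for up to 16 users but not for 40, while 8 GB tables do fit. This is consistent with the smaller tables being used at higher load.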

The advantage of this approach is that this is stressing the system on a key operation for the Oracle DB workloads that are CPU-bound: Oracle RDBMS Logical I/O.

These tests take longer to run than the simple “Tests with a CPU-burning script” described above. Moreover, they require some Oracle DBA expertise to configure and to validate the test results.

Measurements and results:

The following figures represent the same data in different ways to highlight different performance and scalability characteristics.

Data with the graphs on Notebooks at this link

Figure 5 – Raw Data and Capacity

- The figure shows how the cumulative Oracle logical IO throughput increases with the number of parallel workers for the three servers tested.
- The common trend is that the Logical IO throughput increases with load up to the number of logical CPUs (16 for RAC54, 32 for RAC55, and 40 for RAC52).
- Measurements are “noisy” so we should take about 10% as the error margin on the collected data points.
- There are differences in performance and total throughput, with RAC55 being the most performant and having the highest throughput.
- At low load (<= 8 concurrent processes), RAC54 and RAC55 have similar performance.
- At high load, RAC55 has about 20% more capacity/throughput of Logical IO than RAC52.

Figure 6 - Speed

- The figure shows Oracle logical IO throughput per worker as a function of load.
- The performance of logical IOs decays with increasing load.
- Logical IO performance appears close to constant up to the number of physical cores of the server (8 for RAC54, 16 for RAC55 and 20 for RAC52) and then decays for higher load, saturating when the number of logical cores is reached.
- Measurements are “noisy” so we should take about 10% as the error margin on the collected data points.
- RAC55 shows the highest performance overall for Logical I/O throughput.
- At load below 16 concurrent workers, RAC55 appears 1.5x faster than RAC52; the gap closes to about 20% at high load.

Figure 7 - Scalability

- The figure shows speedup as a function of load.
- Speedup, a measure of scalability, is calculated for this plot as the ratio (cumulative Logical I/O at load n) / (cumulative Logical I/O at load 1).
  - Linear scalability would be represented by a line with speedup = number of parallel workers.
- A general trend observed in the data is that the scalability curves start close to the ideal linear scalability and then bend downwards due to contention.
- RAC55 has the best scalability behavior of the three servers tested. At low load (less than 8 concurrent workers) RAC55 and RAC54 have similar behavior.
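The per-worker throughput of Figure 6 and the speedup of Figure 7 both follow from the cumulative Logical I/O throughput of Figure 5. The sketch below shows the calculation and adds the ratio of speedup to ideal linear speedup as a convenience. The function name `lio_scaling`, the `efficiency` column and the sample throughput values are assumptions made for illustration, not measured data.

```python
def lio_scaling(throughput: dict[int, float]) -> list[dict[str, float]]:
    """Derive per-worker throughput, speedup and efficiency from cumulative throughput.

    throughput maps the number of concurrent workers to cumulative Logical I/O per second.
    """
    base = throughput[1]  # cumulative throughput with a single worker
    rows = []
    for n, total in sorted(throughput.items()):
        speedup = total / base  # as defined for Figure 7
        rows.append({
            "workers": n,
            "per_worker": total / n,    # as plotted in Figure 6
            "speedup": speedup,
            "efficiency": speedup / n,  # 1.0 means ideal linear scalability
        })
    return rows


# Hypothetical cumulative Logical I/O per second, NOT measured data
sample = {1: 1.0e6, 8: 7.6e6, 16: 14.0e6, 32: 20.0e6}
for row in lio_scaling(sample):
    print(row)
```

In this made-up example the efficiency drops as the number of workers grows, which is the downward bend away from the linear-scalability line described above.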

Conclusions

This work collects a few tests and measurements on stress testing and CPU loading on three different platforms of interest for the CERN Oracle database service.

The tests performed are narrow in scope, just addressing the CPU load.

Two different testing tools have been used for these tests: testing with a simple CPU-burning script loop run in parallel, and testing with an Oracle-specific workload generator for Logical I/O.

The tools used, as well as the measured data and their analyses, are available at this link.

We find that the newest server model (RAC55) has the highest CPU per-core performance, scalability, and overall CPU throughput.

This work has been done in the context of the CERN databases and analytics services and the ATLAS data engineering efforts. Many thanks to my colleagues for their help and suggestions.